TL;DR

- AI code detectors estimate how likely it is that a piece of code was written by a human or generated by AI.

- Most AI detectors measure probability on a 0–100 scale.

- AI code detectors rely on a mix of techniques under the hood. They measure token-level perplexity (how predictable each piece of code is), analyze line-to-line variance — often called burstiness (how much the structure and style fluctuate), and use a transformer-based classifier (a machine learning model trained to spot patterns) trained on pairs of human-written and AI-generated code.

- AI code detectors are generally less accurate than AI text detectors. Natural language has a much broader and more varied vocabulary, which creates richer patterns for models to learn from.

- Top text detectors can reach accuracy rates above 95%, but AI code detectors tend to fall somewhere in the 60%–90% range.

- The accuracy increases for longer snippets and deteriorates for shorter snippets. It’s best to paste 500+ lines of code for analysis.

- AI detectors can produce false positives, so their results shouldn’t be taken at face value.

How Do AI Code Detectors Work

To understand whether AI detectors are accurate, we first need to understand how they work. At its core, an AI code detector analyzes a code snippet and estimates the likelihood that it was generated by a large language model, giving a 0–100 probability score. Most detectors are built on the same core ideas used in AI text detection. In practice, that usually means combining three signals:

- Perplexity

- Burstiness

- A supervised classifier.

Perplexity

Perplexity measures how predictable the code is based on patterns the model has learned — if the next token in a sequence "surprises" the model, that’s high perplexity.

Perplexity is a reliable signal of AI code. A 2024 study on detecting AI-generated source code by Wang et al. found that code written by real people has higher perplexity than LLM-generated code, and that LLM output has more consistent line-by-line perplexity than human code.

Another area where low perplexity can give away AI code is comments. Not only do human-written comments exhibit much higher perplexity than AI-written ones, but AI agents also tend to add comments everywhere, which is a giveaway in and of itself, and AI code detectors know to look for it.

Why does this happen? When an LLM generates new code, it tends to pick the highest-probability continuation at every step, which leaves a statistical fingerprint. Human programmers, by contrast, jump between variable names, rewrite comments, abandon a pattern halfway through a function, and have “aha” moments. That variance is what the detector is looking for.
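To make the idea concrete, here is a minimal sketch of how perplexity is computed from the probability a model assigned to each token that actually appeared. It assumes those per-token probabilities are already available (a real detector would obtain them from a language model). The function name and the sample numbers are made up for illustration.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Hypothetical probabilities a model assigned to each observed token.
predictable = [0.9, 0.8, 0.9, 0.85]  # the model was rarely surprised
surprising = [0.2, 0.05, 0.4, 0.1]   # the model was often surprised

print(perplexity(predictable) < perplexity(surprising))  # True
```

A sequence the model finds predictable lands close to 1, while a sequence full of unexpected tokens scores much higher.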

Burstiness

Burstiness measures how much perplexity varies across the document. It is possible for a human to write a code snippet or a comment that may exhibit low perplexity, but it’s unlikely to be uniform throughout a large document — which is why AI code detection accuracy is much higher on longer snippets.

The Wang et al. study also confirms this: code produced by LLMs exhibits more consistent line-by-line perplexity than human-authored code.
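One simple way to put a number on this is the standard deviation of per-line perplexity. The sketch below assumes the per-line values have already been computed. Using the standard deviation is our illustrative choice, not a description of any particular detector, and the sample values are invented.

```python
import statistics

def burstiness(line_perplexities):
    """Population standard deviation of per-line perplexity."""
    if len(line_perplexities) < 2:
        raise ValueError("need at least two lines to measure variation")
    return statistics.pstdev(line_perplexities)

# Hypothetical per-line perplexities for two snippets of equal length.
uniform_lines = [3.1, 3.0, 3.2, 3.1, 3.0]  # steady, little variation
uneven_lines = [2.0, 9.5, 3.1, 14.2, 2.4]  # spiky, lots of variation

print(burstiness(uniform_lines) < burstiness(uneven_lines))  # True
```

With only a handful of lines the estimate is noisy, which is one way to see why longer snippets are easier to classify.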

The Classifier layer

To further increase accuracy, modern AI code detectors also use a trained classifier. This is typically a transformer, a type of neural network, trained on labelled examples of both human-written and AI-generated code. During training, the model predicts a label (AI-generated or human-written) for each prepared snippet, the prediction is compared with the known correct label, and the model's weights are adjusted to reduce the error. This process is called supervised learning.

A classifier is very good at recognising and identifying patterns, and works on statistical probabilities — for example, how many comments there are in human-written code per N lines, versus AI-generated code.
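As an illustration of the kind of feature mentioned above, the sketch below counts comments per N non-blank lines of Python source using the standard `tokenize` module. The function name is hypothetical, and real classifiers learn their own features rather than relying on a single hand-picked one.

```python
import io
import tokenize

def comments_per_n_lines(source, n=100):
    """Number of comment tokens per n non-blank lines of Python source."""
    code_lines = [line for line in source.splitlines() if line.strip()]
    if not code_lines:
        return 0.0
    tokens = tokenize.generate_tokens(io.StringIO(source).readline)
    comment_count = sum(1 for tok in tokens if tok.type == tokenize.COMMENT)
    return comment_count * n / len(code_lines)

sample = (
    "# add two numbers\n"
    "def add(a, b):\n"
    "    # return the sum\n"
    "    return a + b\n"
)
print(comments_per_n_lines(sample, n=10))  # 5.0
```

Tokenizing, rather than searching for `#`, avoids counting hash characters that appear inside string literals.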

Bonus technique: watermarking

Watermarking refers to the process of adding some kind of identifier, a mark, to AI code during generation. It is a bit like overlaying a logo on a Sora 2 video.

The idea goes back to Scott Aaronson's 2022 talk and Kirchenbauer et al.'s 2023 paper "A Watermark for Large Language Models" (KGW), which partitions the vocabulary into random "green" and "red" token lists at each step and softly promotes green tokens during sampling. A detector that knows the hash function can count green-token frequency and compute a p-value: text with too many green tokens is almost certainly from a watermarked model.
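The detection side of this scheme can be sketched in a few lines. The example below is a toy version under stated assumptions: a 50-token vocabulary, a green fraction of 0.5, a SHA-256 hash of the previous token as the seed, and a demo sequence that always picks a green token (a "hard" watermark, used here only to keep the output deterministic, whereas the scheme described above promotes green tokens softly). All names and the key are hypothetical.

```python
import hashlib
import math
import random

VOCAB = [f"tok{i}" for i in range(50)]  # toy vocabulary (assumption)
GAMMA = 0.5                             # fraction of the vocabulary marked green

def green_list(prev_token, key="demo-key"):
    """Seed a PRNG from the previous token and draw the green tokens."""
    digest = hashlib.sha256(f"{key}:{prev_token}".encode()).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    return set(rng.sample(VOCAB, int(GAMMA * len(VOCAB))))

def watermark_z_score(tokens):
    """z-score of the green-token count against the no-watermark null."""
    scored = len(tokens) - 1  # the first token has no predecessor
    if scored < 1:
        raise ValueError("need at least two tokens")
    greens = sum(tok in green_list(prev) for prev, tok in zip(tokens, tokens[1:]))
    return (greens - GAMMA * scored) / math.sqrt(scored * GAMMA * (1 - GAMMA))

def p_value(z):
    """One-sided p-value under a standard normal approximation."""
    return 0.5 * math.erfc(z / math.sqrt(2))

# Demo: a sequence in which every token is drawn from the green list.
marked = ["tok0"]
for _ in range(100):
    marked.append(sorted(green_list(marked[-1]))[0])

print(watermark_z_score(marked))  # 10.0
```

With 100 scored tokens, all of them green, the count sits ten standard deviations above what unwatermarked text would produce, so the p-value is vanishingly small.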

In practice, watermarking is still rarely available for code detection, although two things have happened since then.

- Google DeepMind shipped SynthID-Text, now integrated into Gemini, which uses a Tournament Sampling variant of the same idea at production scale.

- OpenAI had a watermarking project running internally but quietly shelved its public AI text classifier in July 2023 due to low accuracy, and has not released a public watermark detector for GPT-4 or later models.

Zero-shot vs fine-tuned AI Code Detectors

Building on the classifier idea, there's a crucial split in how detectors are built, and most vendors don't explain which side of it they're on.

- Fine-tuned detectors. Examples include Ghostbuster and GPTSniffer. They use thousands of paired human and AI samples during training. They hit high accuracy on the models they were trained on, but low accuracy on other models. Ghostbuster, for instance, is nearly useless on LLaMA output because it was tuned specifically on ChatGPT.

- Zero-shot detectors. Examples include Overchat AI, GLTR (Giant Language Model Test Room, 2019), DetectGPT (Stanford, 2023), and Binoculars (2024). They require no paired training data. Each detector takes its own approach. For example, Binoculars compares two closely related LLMs and computes the ratio of perplexity to cross-perplexity. In the authors' testing, it detected over 90% of ChatGPT-generated samples at a 0.01% false positive rate, without ever seeing ChatGPT data during training.

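Both approaches are valid. Fine-tuned detectors are highly accurate on output from the models they were trained on, but much less accurate on anything else. Zero-shot detectors rely on statistical signals rather than model-specific training data, so they do not need retraining every time a new model appears.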
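To give a feel for the Binoculars-style ratio mentioned above, here is a simplified sketch. It assumes the score is the log-perplexity of the text under one model divided by the average cross-entropy between the two models' next-token distributions, and it takes those distributions as plain dictionaries instead of calling real LLMs. The function names and toy numbers are ours, not the authors' code.

```python
import math

def log_perplexity(tokens, dists):
    """Average negative log-probability of the observed tokens under one model."""
    return -sum(math.log(d[t]) for t, d in zip(tokens, dists)) / len(tokens)

def log_cross_perplexity(dists_a, dists_b):
    """Average cross-entropy between two models' next-token distributions.

    Assumes model B gives non-zero probability to every token model A lists.
    """
    total = 0.0
    for da, db in zip(dists_a, dists_b):
        total += -sum(p * math.log(db[tok]) for tok, p in da.items())
    return total / len(dists_a)

def binoculars_style_score(tokens, dists_a, dists_b):
    """Ratio of log-perplexity to log cross-perplexity (lower looks more machine-like)."""
    return log_perplexity(tokens, dists_a) / log_cross_perplexity(dists_a, dists_b)

# Toy next-token distributions over a two-token vocabulary (made-up numbers).
model_a = [{"x": 0.9, "y": 0.1}, {"x": 0.9, "y": 0.1}]
model_b = [{"x": 0.8, "y": 0.2}, {"x": 0.8, "y": 0.2}]

expected_text = ["x", "x"]    # tokens both models expected
surprising_text = ["y", "y"]  # tokens both models found unlikely

low = binoculars_style_score(expected_text, model_a, model_b)
high = binoculars_style_score(surprising_text, model_a, model_b)
print(low < high)  # True
```

Dividing by the cross-perplexity normalizes for how hard the context is to predict in the first place, which is what lets the method work without paired training data.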

Can AI Detectors Detect AI Code

Now that we understand how AI detectors work in detail, what techniques they use to identify AI code, and which of these techniques are effective, let’s talk about real-world, practical accuracy.

Short answer: Yes, AI detectors can identify AI-generated code, but they will always be somewhat less reliable than AI text detectors, and the accuracy depends very heavily on the length of the code.

Accuracy by Input Length

Total sample size: n = 3,600 code snippets

| Code Length (Lines) | Sample Size (n) | Detection Accuracy |
| --- | --- | --- |
| 5–10 | 520 | 72% |
| 10–25 | 680 | 80% |
| 25–50 | 740 | 87% |
| 50–100 | 620 | 91% |
| 100–200 | 460 | 94% |
| 200–500 | 360 | 96% |
| 500+ | 220 | 98% |
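As a quick sanity check on the figures above, the snippet below recomputes the total sample size and the sample-weighted average accuracy across all length buckets. The numbers are copied from the table, and the variable names are ours.

```python
# (length bucket, sample size, accuracy in percent) copied from the table above
buckets = [
    ("5–10", 520, 72),
    ("10–25", 680, 80),
    ("25–50", 740, 87),
    ("50–100", 620, 91),
    ("100–200", 460, 94),
    ("200–500", 360, 96),
    ("500+", 220, 98),
]

total = sum(n for _, n, _ in buckets)
weighted = sum(n * acc for _, n, acc in buckets) / total

print(total)               # 3600
print(round(weighted, 1))  # 86.7
```

Across the whole sample the average comes out at about 86.7%, so any single headline accuracy figure depends heavily on the mix of snippet lengths being tested.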

AI Detector Limitations

The results above are impressive, but still, AI code detectors are less accurate than AI text detectors — this is a limitation of the medium itself, and it’s worth understanding.

Programming languages are highly structured, and that limits how much variation detectors can actually observe. For example, in Python, indentation isn't optional: inconsistent indentation stops the program with an IndentationError before any of it runs. That means there are only a few valid ways to format any given piece of logic. As a result, both humans and AI models tend to produce code that is syntactically very similar and fairly predictable. This reduces the "space" of variation that detectors rely on, making it harder to separate human-written code from AI-generated code.

There’s also the issue of standard practices. In many cases, experienced developers converge on very similar solutions. For example, a typical binary search implementation will look almost identical whether it’s written by a human or generated by a model like GPT or Claude.

We're including this information to help you understand that AI code detectors will produce false positives in some cases, and you should be more sceptical about their output than that of a text detector.

Can AI detectors detect any type of code?

No. Some types of code are almost impossible to detect and will almost certainly give a false reading.

Short utility functions (5–30 lines)

Near-impossible to detect. There are maybe three idiomatic ways to write, for example, a debounce function, and both LLMs and developers will write it in the same way.

Boilerplate and scaffolding

In many cases, this type of code is effectively indistinguishable and not particularly meaningful to try to detect. CRUD controllers, DTO classes, configuration files, and migration scripts tend to follow the same patterns because the frameworks themselves impose that structure. Whether a human or a tool like Copilot writes them, the resulting code often looks very similar.

The practical takeaway: instead of running a single detector across a mixed PR and averaging the scores, it is best to segment code by its type first, run separate checks, and then interpret the numbers.
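A minimal sketch of that segmentation step might look like the following. The path patterns, the category names, and the `score_fn` callback (standing in for whichever detector you use) are all hypothetical and would need adjusting to your own repository.

```python
from collections import defaultdict
from fnmatch import fnmatchcase

# Hypothetical path patterns; adjust them to your own repository layout.
CATEGORY_PATTERNS = {
    "config": ["*.yml", "*.yaml", "*.json", "*.toml"],
    "migration": ["*migrations/*"],
    "boilerplate": ["*controller*", "*dto*"],
}

def categorize(path):
    """Return the first category whose pattern matches, else 'logic'."""
    lowered = path.lower()
    for category, patterns in CATEGORY_PATTERNS.items():
        if any(fnmatchcase(lowered, pattern) for pattern in patterns):
            return category
    return "logic"

def segment_and_score(files, score_fn):
    """Score each file on its own and group the results by category."""
    grouped = defaultdict(list)
    for path, source in files.items():
        grouped[categorize(path)].append((path, score_fn(source)))
    return dict(grouped)

print(categorize("src/UserController.java"))  # boilerplate
print(categorize("src/pricing/engine.py"))    # logic
```

Keeping the per-file scores grouped by category, rather than collapsing them into one number, lets a reviewer discount the boilerplate and focus on the files where a detector score is more meaningful.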

Is it possible to bypass AI code detectors?

Yes, and it’s relatively easy to do. This comes back to a key point: the statistical differences between AI-generated code and human-written code are smaller than they are in natural language, because code has fewer degrees of freedom.

Because of that, anyone trying to disguise AI-generated code can often do so with simple changes. Renaming variables, reordering independent lines, running a formatter, or adding a few natural-sounding comments can already change the statistical signals detectors rely on. Another common approach is to prompt the model to imitate a specific style, such as code written by a junior developer working quickly.

In practice, most real-world cases fall into a few broad categories, each of which breaks detectors in different ways:

- Substitution changes include renaming or swapping constructs (for example, replacing a loop style or changing conditional structure without affecting logic). These are simple, low-effort edits that can disrupt token-level statistical signals.

- Formatting attacks include running tools like Prettier, Black, or gofmt, or simply reformatting comments and spacing. These changes don’t affect logic, but they can significantly alter the patterns detectors rely on.

- Mixed authorship is probably the most common case in practice. A developer might generate code with an LLM and then edit it, producing a blend of human and AI input that is difficult to classify reliably.

- Chain edits involve multiple transformation steps — for example, generate code with one model, refine with another, and then run a formatter. Each step slightly changes structure and style, gradually removing any consistent signature.

As you can probably see, some of these techniques also occur as a side effect when a developer starts editing the LLM output. Once again: do not treat AI code detection as the final say.
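To see why formatting attacks are so cheap, consider this small sketch using Python's standard `ast` module. It compares two versions of the same function that differ only in spacing, blank lines, and comments. The helper name and the sample function are made up for illustration.

```python
import ast

def same_ast(source_a, source_b):
    """True when both sources parse to the same abstract syntax tree."""
    return ast.dump(ast.parse(source_a)) == ast.dump(ast.parse(source_b))

original = "def area(w,h):\n    return w*h   # width times height\n"
reformatted = "def area(w, h):\n\n    # compute the area\n    return w * h\n"

print(original == reformatted)          # False
print(same_ast(original, reformatted))  # True
```

The text a detector sees is different, yet the parsed program is identical, so a formatter or a few comment edits can shift token-level statistics without touching the logic.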

Who Uses AI Code Detectors and Why

The most common users of AI code detectors are educators, recruiters, tech leads, senior developers and students.

Educators

Professors and teaching assistants use AI detectors to get a sense of whether a submission may have involved assistance from large language models, particularly in courses with no-AI or limited-AI policies. In practice, these tools can save a lot of time by flagging which submissions are worth reviewing more thoroughly.

Recruiters and hiring managers

Anecdotally, around 25% of developers use an AI coding assistant during live interviews, and this figure is probably much higher for take-home assignments. Therefore, recruiters need a way to quickly check whether something like this took place, particularly in scenarios where the use of an AI assistant wasn’t permitted during the test.

Tech leads

Within engineering teams, AI-assisted coding is now common (84% of developers use or plan to use AI tools, according to Stack Overflow's 2025 Developer Survey), so the focus is less on whether AI was used and more on whether the resulting code is correct, maintainable, and well understood. In this context, AI detectors can serve as a lightweight signal during code review. If a pull request is flagged, it may simply prompt reviewers to spend a bit more time on areas like edge cases, error handling, or consistency with existing patterns.

Students

We mentioned educators above, but students may also use AI tools during coding assignments to check whether the output reads as AI-generated or human-written, and whether their professor will be able to tell the difference. While we don't recommend this behaviour, it does exist, and it wouldn't be fair not to acknowledge it.

What Is the Best AI Code Detector

Quick answer: Overchat AI

It is important to be explicit about the differences between good and bad AI code detectors. We consider the following four criteria:

- The detector should cover all popular programming languages, either through pre-training on each one or by using a technique that allows it to be language-independent.

- The detector should work reliably on relatively short snippets of around 500 tokens.

- It should be user-friendly and provide quick results — otherwise it won't be practical for everyday use. Low friction is very important.

- It should provide a probability rather than a yes/no answer. As we explained above, it is not possible to definitively determine whether the code is of AI or human origin.

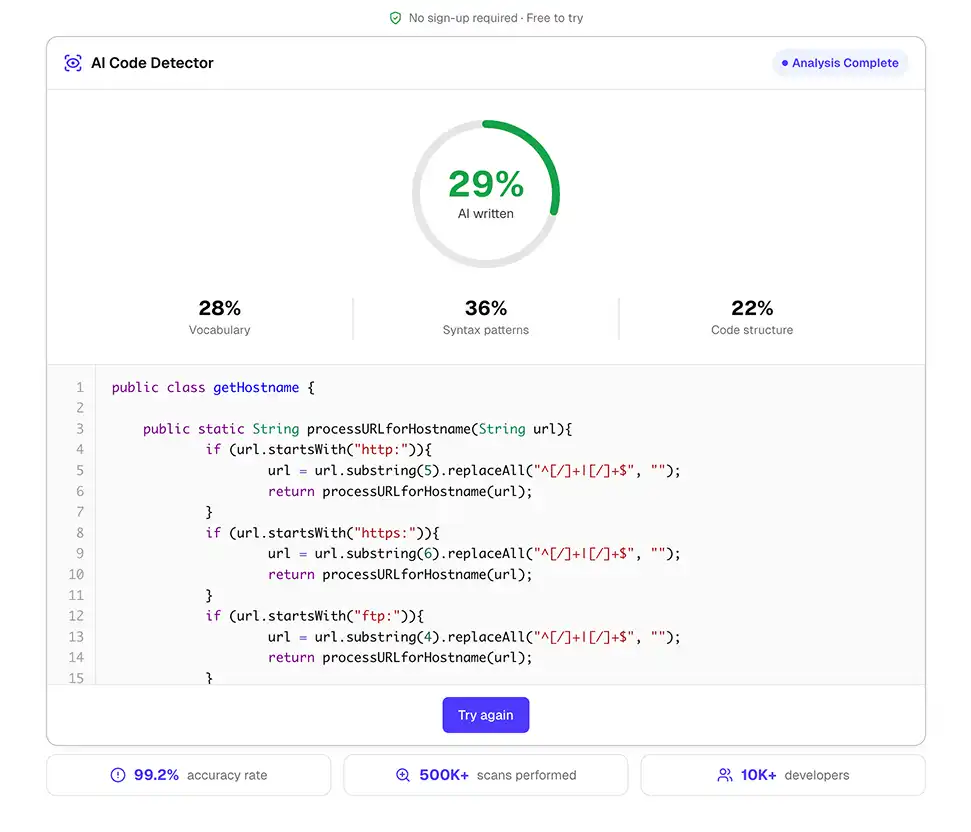

Overchat AI's AI Code Detector fits the most common real-world use cases:

- Covers Python, JavaScript, TypeScript, Java, C++, C#, Go, and more.

- Works online, without sign-up, and is free to try, so you can judge the accuracy on your own code.

- You can paste code and get a result immediately, which matters if you're running spot checks during a code review, for example.

To make it work for you in practice, paste the code snippet into an online editor and run the scan. Try it here.

Frequently Asked Questions

Can Turnitin detect AI-generated code?

Turnitin's AI detection is designed for prose, so while it can flag comments and docstrings if they read like LLM output, it doesn't have a mechanism to check the actual code logic. For code, Overchat AI, which was built with awareness of syntax and idiom, will be more accurate.

Does GitHub Copilot code get detected?

Sometimes, and less often than pure ChatGPT output. Copilot completions are usually short, mid-function, and sit inside human-written code — which means the statistical profile of the finished file is mostly human.

Can professors see if I used ChatGPT for coding?

Depends entirely on the tool and the assignment. Short utility functions and standard algorithms are effectively undetectable. Longer, architecture-specific code with distinctive business logic is more detectable.

Are AI code detectors accurate enough to use as evidence?

No. As of 2026, no AI code detector produces results reliable enough to serve as standalone evidence in an academic misconduct case or a hiring decision. Detector output is a reason to investigate further, not proof.

What's the difference between AI content detectors and AI code detectors?

AI content detectors, such as the Overchat AI text detector, GPTZero, Originality.AI, or Turnitin, are tuned on natural language, while AI code detectors, such as the Overchat AI code detector or GPTSniffer, are tuned on source code, which has different statistical properties. In practice, AI code detectors are the more accurate choice when the input is code.

Can AI detectors tell which model wrote the code?

To a certain extent, but this is not reliable. If you’ve used multiple AI models, you’ve probably noticed that the output for the same prompt can be eerily similar, even for text. With code, where much of the output is idiomatic anyway, this becomes even more apparent.

Is there a way to make AI code undetectable?

Practically, yes. Running the output through a formatter, renaming identifiers, restructuring the code, prompting the model to write in a specific style, or editing it by hand all push the statistical profile toward human.

Key Takeaways

- AI code detectors work by measuring perplexity (i.e. per-token predictability) and burstiness (i.e. variance across the snippet), and then applying a trained classifier to identify patterns that the raw statistics overlook.

- They give a probability score of 0–100 on whether the code was written by a human or generated by AI.

- AI code detectors can be over 90% accurate, but this depends heavily on the type and length of the input.

- For the best results, we recommend pasting at least 500 lines of code.

- False positives do occur. Clean, idiomatic human code can resemble AI code to a detector that is trained on perplexity, so it is best not to treat the detection score as definitive.

- Use detectors for triage, not verdicts. The correct workflow is to run a scan, carefully read the flagged code, compare it with the author's baseline and never act on a single score.

- For practical AI code detection that handles multiple languages, identifies source models, and fits a code-review workflow, Overchat AI's AI Code Detector is the tool to reach for.