What is Qwen 3.5?

Qwen 3.5 is the latest AI model from Alibaba Cloud's Qwen team, released in February 2026. Unlike its predecessor Qwen 3, it is natively multimodal: it can understand text, images, and video within a single system. Its most powerful configuration has 397 billion parameters. The Qwen family is the most downloaded open-source model family on Hugging Face, with more downloads than the other major open-source models combined. Qwen 3.5 competes directly with DeepSeek V3.2 and, in terms of benchmark performance, matches GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro.

Alibaba Cloud is the cloud computing division of Alibaba Group, one of China's largest technology conglomerates. By December 2025, Qwen's downloads on Hugging Face exceeded all competing major open-source models combined, making the Qwen team one of the most trusted and popular developers of open-source AI.

What can you use the Qwen 3.5 model for?

Thanks to its multimodal design, Qwen 3.5 is great for a wide range of tasks: writing, research, analyzing images and documents, answering questions in virtually any language, solving homework, and everyday conversations. It handles creative and analytical tasks equally well, making it a strong all-rounder for personal use.

Qwen 3.5 Architecture & Models

The Qwen 3.5 series includes four open-source model variants: the flagship 397B-A17B, a mid-size 122B-A10B, a compact 35B-A3B, and a dense 27B model. The first three use a MoE (Mixture-of-Experts) architecture that activates only a small fraction of the total parameters for each token it processes, delivering powerful performance at significantly lower computational cost. In each MoE name, the first number is the total parameter count and the number after "A" is the count of parameters active at a time.
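To make the "small fraction" concrete, here is a minimal Python sketch that reads the fraction straight off the variant names. It assumes the naming pattern `<total>B-A<active>B` means total and active parameters in billions; the helper names `parse_moe_name` and `active_fraction` are made up for this illustration and are not part of any Qwen tooling.

```python
import re


def parse_moe_name(name: str) -> tuple[float, float]:
    """Split a name like '397B-A17B' into (total, active) parameters in billions.

    Assumption: '<total>B-A<active>B' encodes total and active parameter counts.
    """
    match = re.fullmatch(r"(\d+(?:\.\d+)?)B-A(\d+(?:\.\d+)?)B", name)
    if match is None:
        raise ValueError(f"not a MoE-style name: {name!r}")
    return float(match.group(1)), float(match.group(2))


def active_fraction(name: str) -> float:
    """Share of the model's parameters that are active at a time."""
    total, active = parse_moe_name(name)
    return active / total


for variant in ("397B-A17B", "122B-A10B", "35B-A3B"):
    print(f"{variant}: about {active_fraction(variant):.1%} of parameters active")
```

Under that assumption, the flagship uses roughly 4% of its parameters at a time, and the two smaller MoE variants roughly 8 to 9%. The dense 27B model has no "A" suffix because all of its parameters are used every time.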

The biggest leap in Qwen 3.5 is its native multimodal design, which lets it understand text, images, and video within a single conversation — no separate models needed. It also retains the hybrid thinking mode from Qwen 3, and now supports 201 languages and dialects (up from 82 in Qwen 3), including Hawaiian and Fijian — making it one of the most versatile multilingual AI models available today.

Qwen 3.5 is released as open-weight, and all four model variants are available for download on Hugging Face and Alibaba's ModelScope. There is also a closed-source Qwen-3.5-Plus version with a 1 million token context window, accessible via Alibaba's Model Studio platform.

Qwen 3.5 also introduces visual agentic capabilities, meaning the model can interpret what's on your screen and help you complete tasks across apps. For everyday users, this translates to a smarter assistant that can look at screenshots, read documents, and guide you step by step. Combined with its large efficiency improvements (60% cheaper and 8x higher throughput than Qwen 3), it sets a new benchmark for what open-source AI can do.

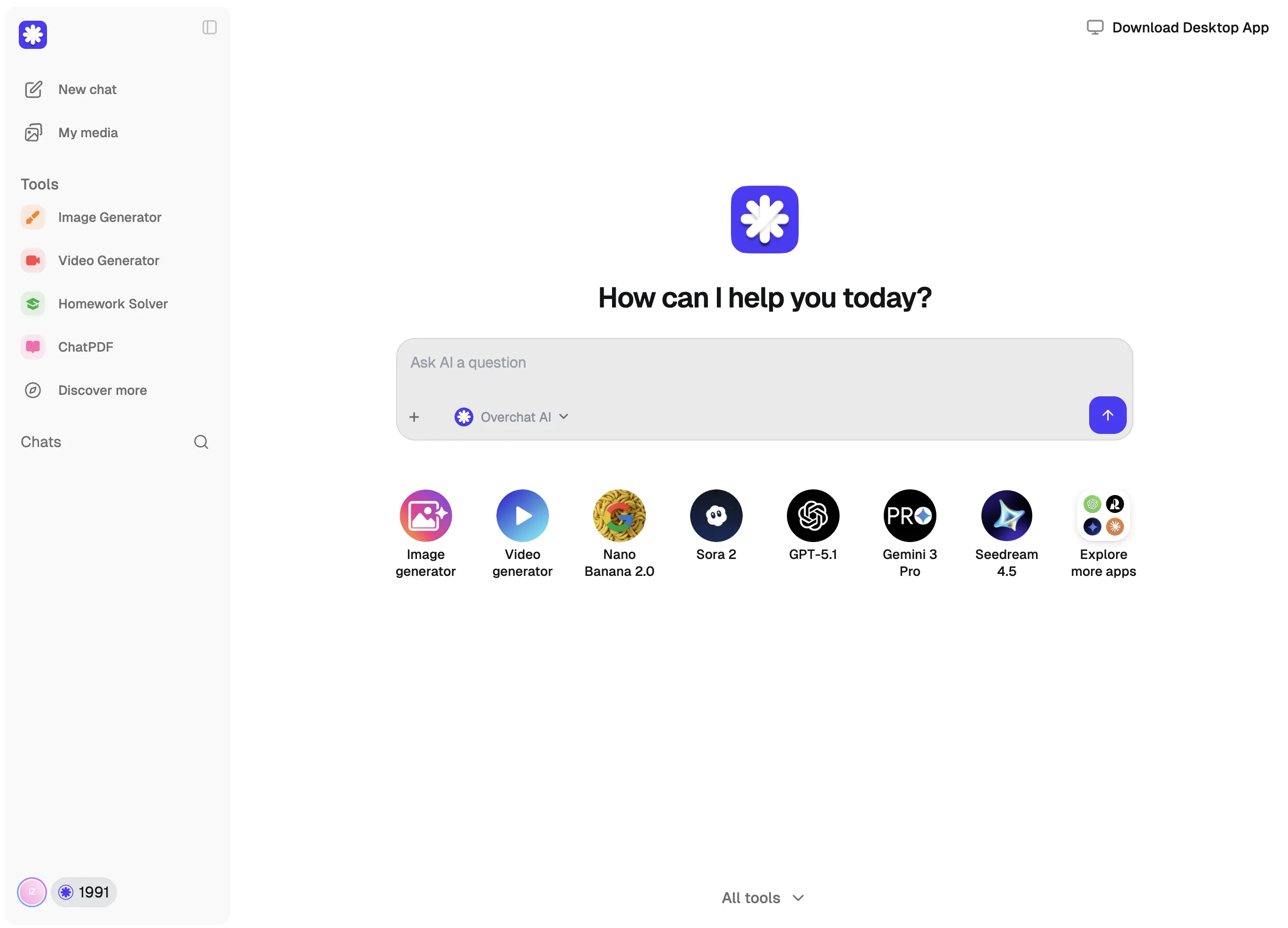

You can download and run Qwen 3.5 models on your own computer, but the flagship 397B model requires serious hardware. For most people, the easiest way to try Qwen 3.5 is through Overchat AI, which lets you use it instantly in your browser with no setup needed.
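For a rough sense of what "serious hardware" means, the back-of-the-envelope sketch below estimates the memory needed just to hold the model weights at a few common precisions. It is an approximation under stated assumptions: it counts weights only (no context cache or runtime overhead), the bit widths are generic examples rather than official Qwen 3.5 release formats, and `weight_memory_gb` is a hypothetical helper written for this illustration.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes.

    Assumption: every parameter is stored at the same precision, and nothing
    else (context cache, activations, runtime overhead) is counted.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Total parameter counts in billions, taken from the variant names in this article.
models = {"397B-A17B": 397, "122B-A10B": 122, "35B-A3B": 35, "27B": 27}

for name, params in models.items():
    sizes = ", ".join(
        f"{bits}-bit: {weight_memory_gb(params, bits):.0f} GB" for bits in (16, 8, 4)
    )
    print(f"{name} -> {sizes}")
```

By this estimate, the flagship needs roughly 794 GB for its weights at 16-bit precision. A MoE model runs only part of its parameters at a time, but all of them typically still have to be loaded, which is why the smaller variants are the realistic choice for a home computer.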
