What Is Private AI?

Private AI refers to any artificial intelligence system where your data never leaves your device. To make this happen, you install the entire AI model on your machine, so it can process your input locally and answer questions without sending data to the cloud.

There are two levels to this.

- Enterprise level. At this level, private AI means deploying models on private cloud instances, essentially servers dedicated to a single organization. Thankfully, none of this is needed to run AI chatbots as a regular user.

- Personal level. At this level, it means running private AI models directly on your laptop or desktop using open-source tools.

The terms get used loosely, so let's clarify. You might see confidential AI in enterprise contexts, which typically refers to AI running inside secure enclaves with hardware-level encryption.

What we're talking about here is simpler and more accessible: downloading an open-source model, running it on your computer, and chatting with it the same way you'd use ChatGPT.

In this article, we'll focus on the personal side of things, because in 2026 it has become quite easy for a regular user to run an offline chatbot.

Why Bother with Private AI?

In short, because your AI chats aren't as private as you think. To understand why, it helps to look at how a conversation with a cloud AI works. When you send a message to ChatGPT, Claude, or Gemini, here's what happens:

- Your input is transmitted over the internet to the provider's server.

- It is processed by their model.

- A response is sent back.

That interaction — your prompt and the output — is typically stored on their infrastructure.

The ChatGPT privacy situation is a good example.

By default, OpenAI retains your conversations and may use them to improve its models. Even if you opt out of training, your data is still transmitted, processed, and stored on their servers.

This creates several concrete risks.

- Any centralized store of user conversations is a target for hackers.

- Cloud-stored data can be subject to subpoenas, government requests, or regulatory compliance obligations you never signed up for.

- Employees at these companies may have some level of access to user data, even if policies restrict it.

These risks are very real. The most famous example happened in early 2023, when Samsung engineers pasted proprietary source code and internal meeting notes into ChatGPT for help with debugging and summarization. That data was transmitted to OpenAI's servers, where conversations were retained and could be used for model training by default, putting it outside Samsung's control. There is no public confirmation that the code ever resurfaced in other users' chats, but Samsung treated the incident as a leak of confidential information and subsequently banned employees from using external generative AI tools. By then, the data was already gone.

Similarly, if you're using cloud AI for anything involving proprietary data, medical information, legal documents, financial records, or even just personal thoughts you'd rather keep private, you're trusting that provider's infrastructure, policies, and security with that information.

Benefits of Private AI

Let’s discuss the main benefits of Private AI:

Privacy. This is the core value proposition of a private LLM. Your prompts, documents, and outputs never leave your device. There are no API logs or server-side conversation histories for anyone else to access, because everything is stored locally on your machine.

You own your data. Data sovereignty is the concept of controlling where your information lives, and the most controlled place for it to live is your own device, which is exactly where it stays when you use private AI.

Works offline. Once you've downloaded a model, you don't need an internet connection to use it. The model runs entirely on your local hardware.

No subscriptions. AI subscriptions add up. For example, ChatGPT Plus costs $20/month, Claude Pro is also $20/month, and if you want to use models from several providers, the total gets expensive quickly. With private AI, you download an open-source model once and use it for as long as you like, with no recurring cost.

No censorship. Cloud AI providers sometimes apply overly restrictive content policies that stop models from answering harmless questions, which limits real use cases. With a local model, you have full control over the system's behavior.

No rate limits. Cloud services throttle usage — message caps, tokens per minute, cooldown periods. When you run a private LLM locally, there are no artificial limits. You can generate as much as your hardware can handle, as fast as it can handle it.

All of these benefits sound great in theory — but they only matter if the setup is simple enough to actually use. Atomic Chat packages everything into a single desktop app: download it, pick a model, and you get all of the above out of the box. No terminal commands, no configuration files, no troubleshooting dependencies.

Private AI vs Cloud AI: Key Differences

Here's how private AI stacks up against cloud-based alternatives:

| Feature | Private AI | Cloud AI |
| --- | --- | --- |
| Data location | Your device | Company servers |
| Internet required | No | Yes |
| Cost | Free (open source) | $20+/month subscription |
| Model quality | Good (8B–70B parameters) | Best (GPT-4o, Claude Opus) |
| Privacy | Full | Limited by provider policies |

You might be wondering whether private AI has any serious limitations, and whether there is still a point in using cloud AI chatbots.

Yes, in some cases. Cloud AI gives you access to the most powerful frontier models, which are too large for most consumer setups, while private AI on a typical machine is limited to mid-sized models. That said, even with just 8 or 16 GB of RAM, unified memory, or VRAM, you're in good shape to run a capable local chatbot.

How to Set Up Your Own Private AI

Getting started with private AI is easier than you might expect, and here’s how:

1. Choose your private AI provider

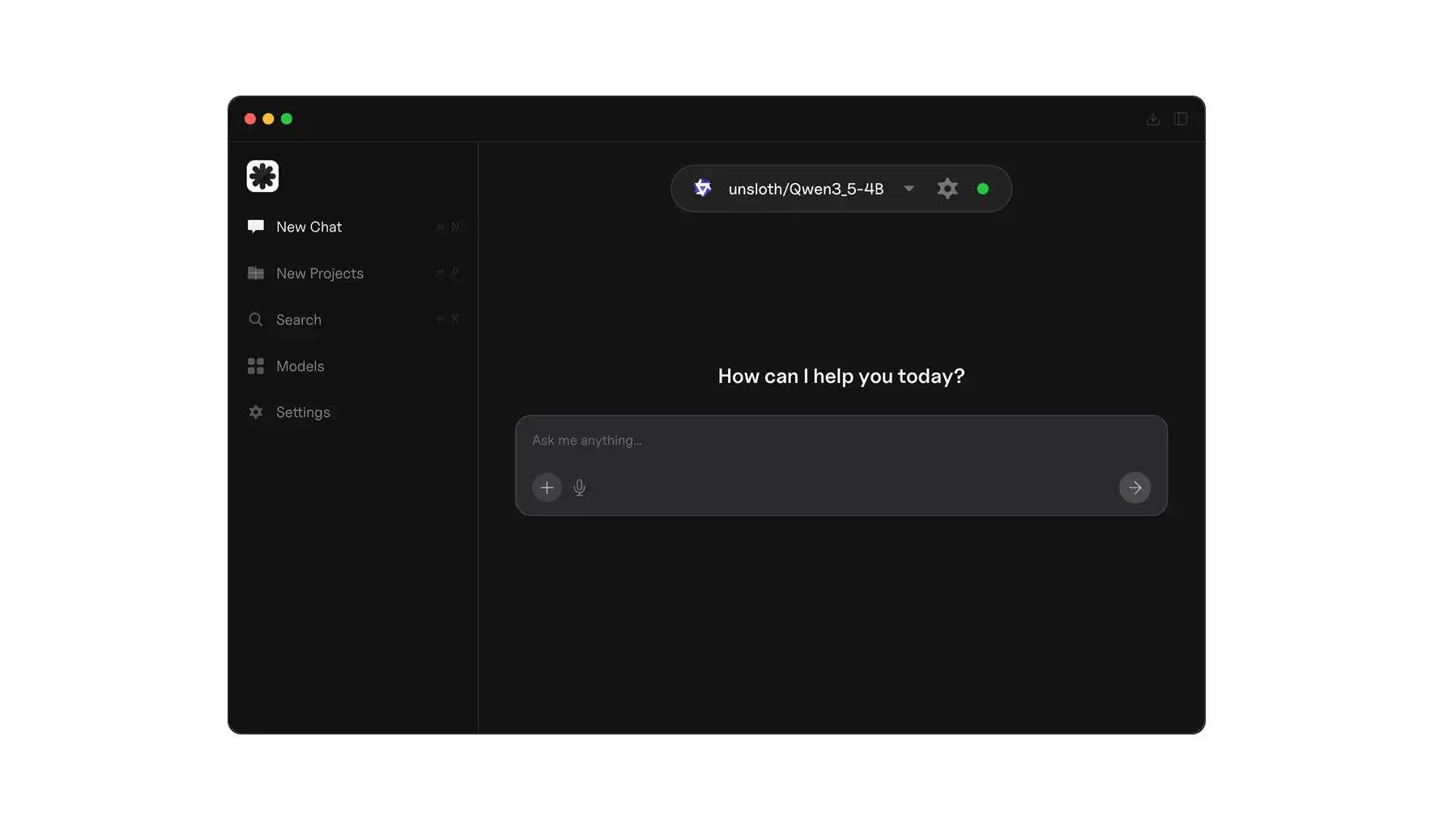

To get started, you need an application that will download the open-source AI model to your PC or Mac, install it, configure it, and give you a chat interface to communicate with it. The simplest option is Atomic Chat: it's designed for non-technical users and handles all setup automatically.

2. Download a model

There are many private LLMs, and the app will give you a list to choose from. If you're unsure which model to start with, these are some of the best local AI models in 2026:

- Qwen3 8B is one of the most respected local LLMs — it supports both a thinking mode for complex reasoning and a fast mode for everyday chat, and runs comfortably in 8GB of RAM.

- Qwen3.5 9B is even newer, adding vision capabilities and a 256K context window. It is a good fit if you have 12GB of VRAM or more.

- Gemma 4 from Google is another strong option, which came out in April 2026.

These models are genuinely capable for writing, coding, summarization, and Q&A — a massive leap from where open-source was even a year ago.

3. Start chatting

That's it. Open the app, select your model, and type a prompt. Everything runs on your machine.
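Atomic Chat needs no code at all, but if you're curious what "everything runs on your machine" looks like programmatically, here is a minimal sketch using Ollama, one of the free tools mentioned in the FAQ. It assumes Ollama is installed and running on its default local port, and that you have already downloaded a model. The tag `qwen3:8b` and the helper names `build_chat_request` and `chat` are illustrative assumptions, not something Atomic Chat itself uses.

```python
import json
import urllib.request

# Assumption: an Ollama server is running locally on its default port.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(prompt: str, model: str = "qwen3:8b") -> urllib.request.Request:
    """Build a POST request for the local chat endpoint (nothing is sent yet)."""
    payload = {
        "model": model,  # assumed tag; use whichever model you downloaded
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply instead of a stream
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = "qwen3:8b") -> str:
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as response:
        body = json.load(response)
    return body["message"]["content"]
```

Calling `chat("Summarize this paragraph for me")` would send the prompt to `localhost` only, so the request never leaves your computer and works without an internet connection.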

FAQ

Is private AI as good as ChatGPT?

Yes, for about 80% of everyday tasks. If your AI chats mainly involve writing emails, summarizing text, brainstorming, and answering questions, modern open-source models perform remarkably well. The gap between a local 8B model and GPT-5.4 is noticeable on complex reasoning, multi-step analysis, and specialized knowledge tasks, but for the work most people do with AI daily, a private model handles it comfortably.

Is private AI free?

Yes, it's 100% free. The models are open-source and free to download, and so are the tools to run them (Atomic Chat, LM Studio, Ollama).

Can I use private AI on my phone?

It's possible but limited. Apps like Private LLM run smaller models on iOS devices. Atomic Chat also has a mobile app that will be released soon. Just know that phone hardware restricts you to smaller models, so expect less capability than running on a laptop or desktop.

Do I need a powerful computer?

Not really. To put this in perspective, you need at least 8GB of RAM to run a 7B-parameter model, which can perform roughly on the level of GPT-4o for everyday tasks. That is not as good as a modern flagship model by any stretch of the imagination, but just a couple of years ago people called that level of capability magic. 16GB is more comfortable and opens up even larger models. In short, most laptops made in the last 3–4 years can run a private AI setup.
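To see roughly where those RAM figures come from, here is a back-of-the-envelope sketch. It assumes the model is stored with 4-bit quantized weights (a common format for local models) and adds a flat 20% for runtime overhead such as the context cache. Both numbers, and the helper name `estimate_model_memory_gb`, are illustrative assumptions rather than exact requirements of any particular app.

```python
def estimate_model_memory_gb(params_billions: float,
                             bits_per_weight: int = 4,
                             overhead_fraction: float = 0.2) -> float:
    """Rough RAM/VRAM needed to load a model, in gigabytes."""
    # Each weight takes bits_per_weight / 8 bytes.
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    # Assumed flat overhead for the runtime and context cache.
    return weight_bytes * (1 + overhead_fraction) / 1e9


for size in (7, 8, 70):
    print(f"{size}B model: about {estimate_model_memory_gb(size):.1f} GB")
```

Under these assumptions, a 7B model needs about 4.2 GB, which leaves room for the operating system on an 8GB machine. The same model stored at 16 bits per weight would need about 16.8 GB, which is why quantized downloads are the practical choice on ordinary laptops.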

Take Control of Your AI Privacy

If you care about where your data goes — and you should — private AI is the most direct solution available. Atomic Chat is the simplest way to run a private LLM on your own machine — download it, pick a model, and start chatting with complete privacy.