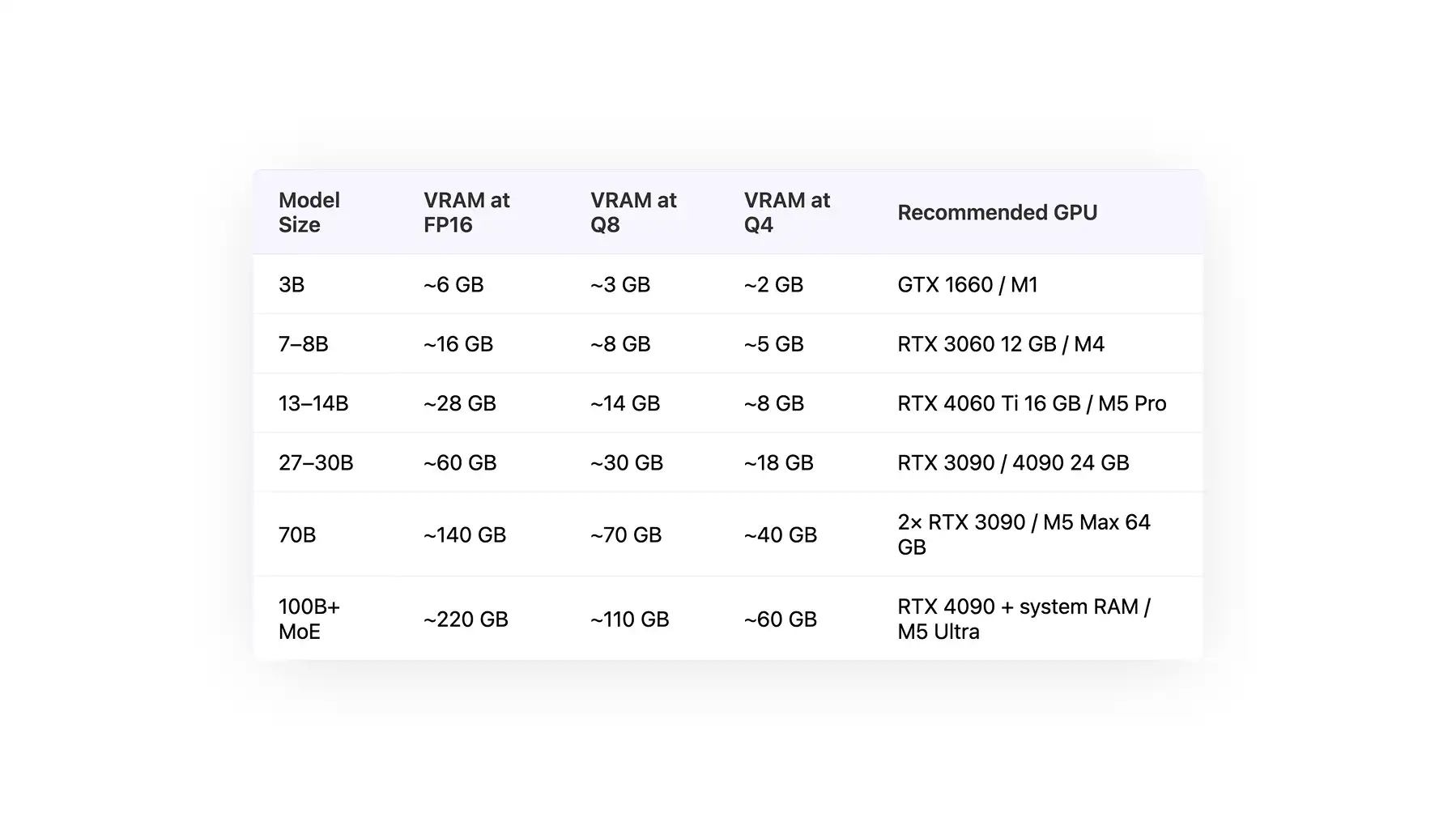

Local LLM Hardware Requirements Table

The table below shows the system requirements for running models of different sizes, ranging from 3B to 100B+ parameters.

| Model Size | VRAM at FP16 | VRAM at Q8 | VRAM at Q4 | Recommended GPU |
| --- | --- | --- | --- | --- |
| 3B | ~6 GB | ~3 GB | ~2 GB | GTX 1660 / M1 |
| 7–8B | ~16 GB | ~8 GB | ~5 GB | RTX 3060 12 GB / M4 |
| 13–14B | ~28 GB | ~14 GB | ~8 GB | RTX 4060 Ti 16 GB / M5 Pro |
| 27–30B | ~60 GB | ~30 GB | ~18 GB | RTX 3090 / 4090 24 GB |
| 70B | ~140 GB | ~70 GB | ~40 GB | 2× RTX 3090 / M5 Max 64 GB |
| 100B+ MoE | ~220 GB | ~110 GB | ~60 GB | RTX 4090 + system RAM / M5 Ultra |

While this is the general picture, let’s discuss in more detail how LLM inference affects different components of your system, why this is important, and how this translates into practice.

GPU and VRAM Requirements for a Local LLM

The table below shows the types of models that can be realistically run based on your GPU and the amount of available VRAM.

| GPU | VRAM | Supported Model Sizes (Q4 / Q8 / FP16) | Example Models |
| --- | --- | --- | --- |
| NVIDIA GTX 1660 | 6 GB | 3B (FP16), 7B (Q4) | LLaMA 3 3B, Mistral 7B (Q4) |
| NVIDIA RTX 3060 12GB | 12 GB | 7–8B (Q8), 13B (Q4) | LLaMA 3 8B, Mistral 7B |
| NVIDIA RTX 4060 Ti 16GB | 16 GB | 13–14B (Q8), 30B (Q4 partial) | LLaMA 3 13B, Mixtral (quantized) |
| NVIDIA RTX 3090 | 24 GB | 13B (FP16), 30B (Q4), 70B (offload) | LLaMA 3 13B, Mixtral 8x7B |
| NVIDIA RTX 4090 | 24 GB | 13B (FP16), 30B (Q4), 70B (better offload) | LLaMA 3 13B, Mixtral 8x7B |
| NVIDIA RTX 5090 | 32 GB | 30B (Q8), 70B (Q4) | LLaMA 3 70B (Q4), DeepSeek models |
| 2× NVIDIA RTX 3090 | 48 GB total | 70B (Q4/Q8 split) | LLaMA 3 70B |
| 2× NVIDIA RTX 4090 | 48 GB total | 70B (Q8), 100B MoE (Q4) | Mixtral, DeepSeek MoE |
| NVIDIA H100 | 80 GB | 70B (FP16), 100B+ (Q8) | LLaMA 3 70B, enterprise LLMs |
| Apple M5 Pro | 36–48 GB unified | 13B–30B (Q4–Q8) | LLaMA 3 13B |
| Apple M5 Max | 64–96 GB unified | 70B (Q4), 30B (Q8/FP16) | LLaMA 3 70B |
| Apple M5 Ultra | 128–192 GB unified | 100B+ MoE (Q4/Q8) | Mixtral, DeepSeek MoE |

Here’s a quick explanation of how we calculated the above. The key point is that VRAM is the binding constraint for local LLM inference.

That’s because the model's weights have to sit in GPU memory for the GPU to compute on them.

If the weights don't fit entirely, you either offload some layers to system RAM (which is dramatically slower) or you can't run the model at all.
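To get a feel for what offloading means in practice, here is a rough sketch that estimates how many layers can stay on the GPU. It assumes all layers are the same size and sets aside a fixed reserve for the KV cache and runtime overhead; the function name, the 2 GB reserve, and the example numbers are illustrative assumptions, not measurements.

```python
import math


def layers_on_gpu(model_gb: float, n_layers: int, vram_gb: float,
                  reserve_gb: float = 2.0) -> int:
    """Estimate how many equally sized layers fit in VRAM.

    Layers that do not fit would be offloaded to system RAM.
    reserve_gb is an assumed allowance for KV cache and runtime overhead.
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Hypothetical example: a 40 GB model with 80 layers on a 24 GB card.
print(layers_on_gpu(model_gb=40, n_layers=80, vram_gb=24))  # 44
```

In this example roughly half of the model would run from system RAM, which is why offloaded setups feel so much slower. Runtimes such as llama.cpp let you set the number of GPU-resident layers yourself (the `--n-gpu-layers` option).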

The baseline rule is ~2 GB of VRAM per 1B parameters at FP16. Quantization lowers that number in exchange for a small quality loss: Q8 roughly halves the VRAM requirement, and Q4 roughly quarters it.

This means that a 14B model that needs 28 GB at FP16 fits on a 16 GB card at Q8 (~14 GB) and comes down to ~8 GB at Q4.
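The rule of thumb reduces to a single multiplication: parameters times bits per weight, divided by eight. The helper below is a minimal sketch of that arithmetic for the weights only; the function name is our own, and it treats Q8 and Q4 as exactly 8 and 4 bits per weight.

```python
def weight_vram_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB.

    1B parameters at 16 bits is about 2 GB, so the result scales
    linearly with both the parameter count and the bit width.
    """
    return params_billion * bits_per_weight / 8


for label, bits in [("FP16", 16), ("Q8", 8), ("Q4", 4)]:
    print(f"14B at {label}: ~{weight_vram_gb(14, bits):.0f} GB")
```

For a 14B model this prints about 28, 14, and 7 GB. The Q4 figures in the table are slightly higher than the pure 4-bit number, most likely because real quantized files also store scaling data alongside the weights.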

You also need to consider the KV cache. As the context window fills up, the model stores attention state for every token, and that state lives in VRAM alongside the weights. For long contexts, budget an extra 10–20% on top of the model size, or more if you're running 100K+ token prompts.
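If you want a number instead of a percentage, the KV cache can be estimated from the model's architecture: two tensors (keys and values) per layer, each holding one vector per KV head per token. The sketch below uses an assumed configuration (32 layers, 8 KV heads, head dimension 128, FP16 cache) that is typical of an 8B-class model with grouped-query attention; check your model's config file for the real values.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_tokens: int, bytes_per_value: int = 2) -> int:
    """Size of the KV cache in bytes for a given context length.

    The leading factor of 2 covers keys and values;
    bytes_per_value=2 assumes an FP16 cache.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


GIB = 1024 ** 3
for tokens in (8_192, 131_072):
    size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, n_tokens=tokens)
    print(f"{tokens:>7} tokens: {size / GIB:.1f} GiB")
```

With these assumed values the cache takes 1 GiB at 8K tokens and 16 GiB at 128K tokens, which shows why very long prompts need far more than a 10–20% margin.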

Consumer GPUs (RTX 3090, 4090, 5090) remain the sweet spot for local LLM hardware in 2026: 24 GB of VRAM (32 GB on the RTX 5090) at a fraction of the price of a data-center card.

Apple Silicon is the other viable path: M3, M4, and M5 Pro, Max, and Ultra chips use unified memory, which means most of your system RAM is usable as VRAM. An M5 Max with 64 GB can run models that would otherwise need an H100.

System RAM, CPU and Storage Requirements for a Local LLM

System RAM: The practical minimum for any useful local LLM work is 16 GB, and 32 GB is a much more comfortable starting point once you want to run 13B+ models.

CPU: if you have a GPU, the CPU barely matters — any modern 8-core chip is fine. It only becomes the bottleneck for CPU-only inference or for prompt processing on very long inputs.

Storage: an NVMe SSD is strongly recommended, because model files are big and you'll load them often. A single model file at Q4 runs from a few gigabytes to 40 GB or more, so it’s a good idea to budget 100–500 GB of free space if you plan to use multiple models.
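Before downloading a batch of models, it can help to check whether they will fit on disk. Here is a small sketch using Python's standard library; the helper names and the example file sizes are our own assumptions.

```python
import shutil


def free_space_gb(path: str = ".") -> float:
    """Free space on the drive that holds `path`, in GB."""
    return shutil.disk_usage(path).free / 1e9


def has_room_for(model_sizes_gb, path: str = ".") -> bool:
    """True if the listed model files would fit in the free space."""
    return sum(model_sizes_gb) <= free_space_gb(path)


# Hypothetical download list: an 8B, a 27B, and a 70B model at Q4.
planned = [5, 18, 40]
print(f"Free: {free_space_gb():.0f} GB, fits: {has_room_for(planned)}")
```

Point `path` at the folder where your runtime stores models, since that may be a different drive from your working directory.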

Hardware Requirements for Local LLM Inference by Model Size

Another approach is to take the model size as the starting point and then see what kind of models can realistically be run given your system constraints. Below, we explain the hardware required to run the different categories of model.

Small Local LLMs (3B–4B) — Entry Tier

Runs on almost any machine from the last three years, including CPU-only on a laptop.

- Examples: Gemma 4 E2B / E4B, Phi-4 Mini

- VRAM (Q4): 2–4 GB

- Works on: integrated graphics, GTX 1660, base M1, or CPU-only with 16 GB system RAM

Mid-Size Local LLMs (7B–14B) — The Sweet Spot

These models are fast enough for a pleasant chat experience and small enough for mid-range hardware.

- Examples: Mistral Small 3, Qwen 3.5-9B, Phi-4 14B

- VRAM (Q4): 5–8 GB

- Works on: RTX 3060 12 GB, RTX 4060 Ti 16 GB, M5 Pro MacBook

Large Local LLMs (27B–70B) — Flagship Tier

These are the strongest general-purpose models that give you performance similar to cloud AI.

- Examples: Gemma 4 31B Dense, Qwen 3.5-27B, Llama 3.3 70B

- VRAM (Q4): 18 GB for 27–32B, 40 GB for 70B

- Works on: RTX 3090 or 4090 24 GB for the 27–32B range; dual 24 GB GPUs or an M3 Max with 64 GB for 70B

MoE Local LLMs (100B+) — Best of The Best

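Mixture-of-Experts models have a large headline size but only activate a fraction of their parameters per token, so they run faster than the raw number suggests. Memory is a different story: every expert still has to be loaded, so VRAM needs follow the total parameter count, not the active one.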

- Examples: Llama 4 Scout (109B / 17B active), Gemma 4 26B A4B, Qwen 3.5-122B-A10B

- VRAM (Q4): 24–60 GB depending on total size

- Works on: multi-GPU setups or an M5 Ultra
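The split between total and active parameters is easy to work through with numbers. The sketch below takes the Llama 4 Scout figures from the examples above (109B total, 17B active) and assumes exactly 4 bits per weight; the function name is our own.

```python
def moe_footprint(total_b: float, active_b: float, bits_per_weight: float = 4):
    """Return (weight memory in GB, fraction of parameters active per token).

    Memory follows the total parameter count, because all experts are
    loaded; per-token compute follows the active count.
    """
    memory_gb = total_b * bits_per_weight / 8
    active_fraction = active_b / total_b
    return memory_gb, active_fraction


memory_gb, fraction = moe_footprint(total_b=109, active_b=17)
print(f"~{memory_gb:.1f} GB of weights, {fraction:.0%} active per token")
```

So a model like this needs about 54.5 GB for its weights at Q4, yet each token touches only about 16% of them, which is where the speed advantage comes from.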

How to Run More Powerful Models on Your Hardware with Atomic Chat

The VRAM numbers above assume standard Q4 quantization. If your machine sits just below a tier (say you have 16 GB of VRAM but want to run a 27B model that would normally need 18 GB), you can still run this model with Atomic Chat, a free, open-source local AI app for Mac.

Atomic Chat ships with TurboQuant, a 3-bit quantization method that shrinks models further than Q4 without any additional quality drop, and it also compresses the KV cache by roughly 6×, which is the other big VRAM consumer at long context lengths.

In practice that means a model that would normally need 18 GB of VRAM can fit into closer to 12 GB, so the next tier up becomes reachable on the hardware you already have.

Local LLM Hardware Requirements on Mac vs Windows vs Linux

Mac (Apple Silicon): unified memory acts as VRAM, so an M2, M3, M4, or M5 Max with 64–128 GB can run models that would otherwise require a data-center GPU. MLX is the fastest runtime on Apple Silicon and usually supports new model releases on the day they come out.

Windows: the best-supported setup in 2026 is an NVIDIA GPU with CUDA. Atomic Chat, Ollama, LM Studio, and llama.cpp all ship native Windows builds, and NVIDIA's drivers are stable.

Linux: CUDA works the same way it does on Windows, and Linux also has the best support for AMD GPUs through ROCm. This makes it the best choice if you want to mix multiple GPUs in one machine or build a dedicated inference box.

Local LLM Hardware Requirements FAQ

What are the minimum hardware requirements for a local LLM?

The minimum requirements to run a capable local LLM are: 16 GB of system RAM, a modern CPU, and either a GPU with 6+ GB of VRAM or an Apple Silicon Mac. That's enough for a 3B–7B model at Q4.

Do I need a GPU to run a local LLM?

No, but it helps. CPU-only inference works for small models (3B–7B) at acceptable speed. Anything larger becomes painfully slow without a GPU or Apple Silicon.

Is a MacBook good enough to run a local LLM?

Yes. Modern MacBooks are some of the best systems for local AI. For example, an M5 Pro with 16–32 GB of unified memory can run models with 7–14 billion parameters, while an M5 Max with 64 GB or more can run models with 70 billion parameters that would otherwise require two 24 GB GPUs. This is because Apple Silicon uses unified memory, which the CPU and GPU share: a MacBook with 48 GB of memory offers roughly the same capacity as two 24 GB GPUs, minus whatever the operating system and other apps are using.

How much RAM do I need for local LLM inference?

Ideally, you should have at least 16 GB of system RAM, but you will notice a significant improvement in performance if you have 32 GB or more, particularly if you want to run powerful models or keep multiple models active simultaneously.

Bottom Line

Local LLM hardware requirements in 2026 come down to one question: how much VRAM (or unified memory) do you have? Match that against the table at the top of this guide and you'll know which tier of models you can run.
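That lookup can be written as a few lines of code. The sketch below copies the Q4 column from the table at the top of this guide and adds an assumed 15% headroom for the KV cache, in line with the 10–20% suggested earlier; the names and the headroom default are our own choices.

```python
# Q4 VRAM figures (GB) from the table at the top of this guide.
Q4_VRAM_GB = [
    ("3B", 2),
    ("7-8B", 5),
    ("13-14B", 8),
    ("27-30B", 18),
    ("70B", 40),
    ("100B+ MoE", 60),
]


def largest_tier(memory_gb: float, kv_headroom: float = 0.15):
    """Largest model tier that fits at Q4, or None if nothing fits.

    kv_headroom is an assumed extra fraction reserved for the KV cache.
    """
    best = None
    for tier, q4_gb in Q4_VRAM_GB:
        if q4_gb * (1 + kv_headroom) <= memory_gb:
            best = tier
    return best


for gb in (8, 24, 64):
    print(f"{gb} GB -> {largest_tier(gb)}")
```

With these assumptions, 8 GB lands in the 7-8B tier, 24 GB in the 27-30B tier, and 64 GB in the 70B tier. Treat the result as a starting point, since long contexts or offloading can shift it in either direction.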